“Alexa, sing me a song.”

The Amazon Echo is but one of several voice-powered devices that have been gaining wide customer appeal over the past year. During the holiday season, Alexa responds with a Christmas carol. Imagine, though, if Alexa could respond with a song or playlist that matched your mood, based solely on the tone of your voice.

Enter Beyond Verbal, a company going beyond data analytics to Emotions Analytics: “by decoding human vocal intonations into their underlying emotions in real-time, Emotions Analytics enables voice-powered devices, apps and solutions to interact with us on a human level, just as humans do.”[1]

How does it work? Beyond Verbal’s software examines 90 different markers in the voice that, according to the company, can reveal loneliness, anger, and even love. The software needs only a device with a microphone, and it measures attributes including Valence, Arousal, Temper, and Mood groups. To date, the software has analyzed 2.3 million recorded voices across 170 countries and draws on 21 years of research.

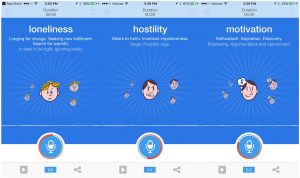

Recently, the company launched a free mobile phone app – Moodies. The app runs continuously and supplies a new emotion analysis every 15-20 seconds. I tested it out while researching for this post and was informed that I was exhibiting feelings of loneliness and seeking new fulfillment, searching warmth. Amusingly, it also stated that I exhibited signs of needing to be right and was ignoring reality — this was shortly after I said that I was skeptical. In a 2-3 minute span, the app proceeded to flash through the following assessments of my speech:

In terms of commercial use cases, the simple Alexa example above is one of many. Others have proposed its use in customer service call centers, executive coaching sessions, and, as of late, even to diagnose disease! In fact, the Beyond Verbal team collaborated with the Mayo Clinic to compare the speech of patients who had coronary artery disease and blocked arteries with that of healthy individuals, and found a distinct factor in the voice that was associated with the likelihood of heart disease! The research analyzed the voices of 88 patients, an additional 9 undergoing other tests, and 21 healthy people. Individuals who exhibited the identified voice factor were 19X more likely to have heart disease. [2][3]

The company has also identified another use case that could help hospitals and health/fitness app developers. They’re hoping to help people understand how changes in a patient’s environment may affect that patient. “We could see that people were tired in the morning, became more enthusiastic, active and creative throughout the day, but that their anger levels spiked around lunchtime,” says CEO Yuval Mor. [4] One of Beyond Verbal’s recent funders – Winnovation – mentioned that they could see smart devices continuously monitoring a person’s voice and sending alerts or making emergency calls if a medical problem requiring immediate attention occurred.

Competitors in this emotional analytics space include Swiss startup nViso SA, which developed a prediction process for patients needing tracheal intubation using facial muscle detection, and Receptiviti, a Canadian startup that uses linguistics to predict emotions. [5]

Suggestions/Improvements:

A more forgiving industry – Voice recognition has come a long way since the first generation of Siri on Apple devices, yet even now it is far from perfect. Assessing intonations in voice and attributing them to emotions is an even more herculean task. It’s interesting that the company has jumped immediately to applications in the healthcare space (recall the collaboration with the Mayo Clinic above), an area that arguably demands a high level of diagnostic accuracy. A more forgiving industry might be a better place to start commercializing the technology.

Accuracy across cultures and limited applicability for non-English speakers – As of now, it isn’t clear how the software accounts for cultural nuances in communication. Presumably testing on other languages will help with some of this, but research should also be done on English speakers from a variety of backgrounds and geographies. The company has begun to do research and run tests on Mandarin-speaking persons. Testing on other languages and different cultures will be important to expand the potential user base.

Mechanism for feedback on Moodies – While the mobile app is a step in the right direction toward collecting more data points to confirm the company’s research and algorithm, the app currently isn’t optimized for improvement or further learning. Users can neither confirm nor deny the accuracy of the predicted emotions. Moodies also doesn’t allow users to enter their environmental conditions or the activity they are performing. If the goal is to eventually also use the data to see how changes in a person’s environment affect mood, a way to collect this data through the app ought to exist.
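To make this suggestion a bit more concrete, here is a minimal sketch of what a single piece of user feedback could look like as data. This is purely illustrative: the `MoodFeedback` record, its field names, and the `agreement_rate` helper are all hypothetical and do not reflect anything Moodies or Beyond Verbal actually exposes.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class MoodFeedback:
    """One user response to one predicted emotion (hypothetical schema)."""
    predicted_emotion: str               # label the app displayed, e.g. "loneliness"
    user_agrees: Optional[bool] = None   # None means the user did not answer
    activity: str = ""                   # free-text activity, e.g. "writing"
    environment: str = ""                # free-text setting, e.g. "office"


def agreement_rate(records):
    """Share of answered records in which the user confirmed the prediction."""
    answered = [r for r in records if r.user_agrees is not None]
    if not answered:
        return None  # no feedback collected yet
    return sum(r.user_agrees for r in answered) / len(answered)
```

Even a record this simple would let the company track how often users agree with a prediction, and the activity and environment fields would supply the context needed to study how surroundings relate to mood.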

Privacy and Personal Data – Another area for improvement is clarity around privacy, particularly if Beyond Verbal intends to target solutions in the healthcare space. According to Matthew Celuszak, CEO of CrowdEmotion, a competitor to Beyond Verbal, “in most countries, emotions are or will be treated as personal data and it is illegal to capture these without notification and/or consent.” Consent is only one area of concern, as the laws and regulations around the storage and use of personal data create additional burdens for companies. [5]

[1] http://www.beyondverbal.com

[2] http://www.beyondverbal.com/can-your-voice-tell-people-you-are-sick/

[3] http://www.beyondverbal.com/beyond-verbal-wins-frost-sullivans-visionary-innovation-leadership-award-using-vocal-biomarkers-to-detect-health-conditions-using-tone-of-voice-2/

[4] https://blogs.wsj.com/venturecapital/2014/09/18/beyond-verbal-raises-3-3-million-to-read-emotions-in-a-speakers-voice/

[5] http://www.nanalyze.com/2017/04/artificial-intelligence-emotions/

[6] https://thenextweb.com/apps/2014/01/23/beyond-verbal-releases-moodies-standalone-ios-app/#

[7] http://www.mobihealthnews.com/content/emotion-detecting-voice-analytics-company-beyond-verbal-raises-33m

[8] https://techcrunch.com/2016/10/17/science-and-technology-will-make-mental-and-emotional-wellbeing-scalable-accessible-and-cheap/
