By Rob Mitchum // October 1, 2014

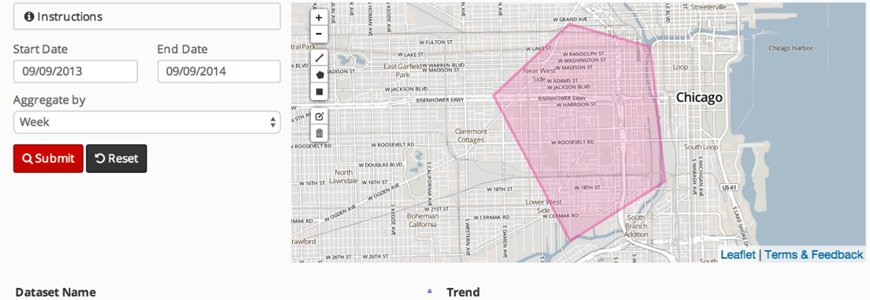

Last week, the Urban Center for Computation and Data (UrbanCCD) unveiled the alpha version of Plenario, a new online portal for accessing, combining, visualizing, and downloading datasets from cities, states, and other sources. With an emphasis on uniting datasets through their space and time coordinates, the platform makes it much easier for researchers, government analysts, journalists, developers, and other users to choose an area of interest and view all the data available at that location.
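To make the idea of uniting datasets by space and time concrete, here is a minimal sketch in Python. It is not Plenario's code or API; the `Record` type, the `query` function, the dataset names, and the sample rows are all hypothetical, made up for illustration. It assumes each record carries a latitude, a longitude, and a timestamp, and it simply keeps the records that fall inside a bounding box and a time window, grouped by the dataset they came from.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass
class Record:
    dataset: str    # name of the source dataset (hypothetical)
    lat: float      # latitude in decimal degrees
    lon: float      # longitude in decimal degrees
    when: datetime  # timestamp of the observation


def in_area_and_window(rec, south, west, north, east, start, end):
    """Return True if the record lies inside the bounding box and time window."""
    return (south <= rec.lat <= north
            and west <= rec.lon <= east
            and start <= rec.when <= end)


def query(records, south, west, north, east, start, end):
    """Collect matching records from every dataset, grouped by dataset name."""
    hits = {}
    for rec in records:
        if in_area_and_window(rec, south, west, north, east, start, end):
            hits.setdefault(rec.dataset, []).append(rec)
    return hits


# Made-up sample rows, for illustration only.
records = [
    Record("crimes", 41.899, -87.680, datetime(2014, 9, 1)),
    Record("business_licenses", 41.901, -87.675, datetime(2014, 9, 15)),
    Record("crimes", 41.750, -87.600, datetime(2014, 9, 2)),  # outside the box
    Record("crimes", 41.900, -87.678, datetime(2013, 1, 1)),  # outside the window
]

# One area of interest and one time range, applied to all datasets at once.
result = query(records, 41.89, -87.69, 41.91, -87.66,
               datetime(2014, 1, 1), datetime(2014, 12, 31))
```

A bounding box is the simplest possible "area of interest." A real system would more likely accept an arbitrary polygon and use a spatial index, but the principle of one shared space-and-time filter across many datasets is the same.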

The debut of Plenario was also celebrated last week at the Code for America Summit in San Francisco, where UrbanCCD fellow Brett Goldstein discussed the origins of Plenario and demonstrated how the platform can help people who work with city data get past the spreadsheet barrier.

The project was also covered by Chicago Magazine’s Whet Moser, who highlighted the effectiveness of Plenario in solving a particular Chicago problem: the legendarily hazy boundaries of neighborhoods, and their lack of alignment with the “community areas” used in many city datasets.

Going back almost 20 years to Citizen ICAM, the city’s long been on the forefront of collecting data about itself and offering it up to the public to parse. The city’s Data Portal is a great example—immense amounts of data freely available in useful forms. But it still has its limitations. Chicago’s a city of neighborhoods, but the data about itself usually comes sliced by community area, ward, police beat, and so forth. Much of it is geolocated, but pulling out that data to reflect the city as it’s lived by its residents, instead of as divided by sociologists many decades ago, is time-consuming.

Moser demonstrates how Plenario gets around that problem, and its potential for data journalism and community usage, with examples looking at crime and business licenses in Ukrainian Village, Logan Square, and Humboldt Park.

In another story, WBEZ’s Chris Hagan talked to Goldstein, who was en route to his Code for America talk, about his ambitions for Plenario: “I don’t want data to be hard to use. I want people to focus on the information. By bringing it together, making it easy and doing the hard work on the underbelly, I hope we’ve done this.” You can listen to the radio segment below, and also read a full transcript of the interview.