- What are some of the specifications of the Stone Edge CCD, camera, and/or telescope?

- What filters does Stone Edge use?

- What is Stone Edge’s magnitude limit?

- What tools do I need to get started with Stone Edge?

- How can I download my images?

- What do I do if the telescope pointing is off?

- Most objects are imaged using the g, r, i, u, and z filters. How can I create a color image using more than three filters?

- I know how to take darks. Where or how can I acquire bias and flat frames? How and why do I use them?

- When imaging a specific object, should I use different exposure times for different filters?

What are some of the specifications of the Stone Edge CCD, camera, and/or telescope?

The telescope is a 20-inch Ritchey-Chrétien manufactured by RC Optical. Some typical specs include:

- Primary diameter: 20 inches

- Focal ratio: f/8.1

- Focal length: 4115mm

- Scale: 50.1 arc-seconds/mm

Stone Edge uses an FLI Proline PL230 CCD camera with an e2v CCD 230-42 chip. Some quick specs include (the rest are in the links):

- Field of View: ~ 26′ x 26′

- Pixel Scale (bin = 1): ~ 0.76″/pix

- Array Size: 2048 x 2048 pixels

- Pixel Size: 15 microns

At times a CMOS camera, an SBIG Aluma AC4040, is used instead. This camera is usually used in low / high gain mode.

- Field of View: ~ 32′ x 32′

- Pixel Scale: ~ 0.47″/pix

- Array Size: 4096 x 4096 pixels

- Pixel Size: 9 microns
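
The scale, pixel scale, and field of view listed above are tied together by the focal length. As a rough sketch (the function names are ours, and it uses only the nominal focal length and pixel sizes quoted above), you can reproduce them like this:

```python
ARCSEC_PER_RADIAN = 206265.0  # number of arcseconds in one radian (rounded)


def plate_scale_arcsec_per_mm(focal_length_mm):
    """Plate scale at the focal plane, in arcseconds per millimetre."""
    return ARCSEC_PER_RADIAN / focal_length_mm


def pixel_scale_arcsec(focal_length_mm, pixel_size_um, binning=1):
    """Angle on the sky covered by one (binned) pixel, in arcseconds."""
    pixel_size_mm = pixel_size_um / 1000.0
    return plate_scale_arcsec_per_mm(focal_length_mm) * pixel_size_mm * binning


def field_of_view_arcmin(focal_length_mm, pixel_size_um, n_pixels):
    """Width of the detector on the sky, in arcminutes."""
    return pixel_scale_arcsec(focal_length_mm, pixel_size_um) * n_pixels / 60.0


FOCAL_LENGTH_MM = 4115.0  # from the telescope specs above

# (camera name, pixel size in microns, pixels per side), all from the lists above
cameras = [
    ("FLI Proline PL230", 15.0, 2048),
    ("SBIG Aluma AC4040", 9.0, 4096),
]

print(f"Plate scale: {plate_scale_arcsec_per_mm(FOCAL_LENGTH_MM):.1f} arcsec/mm")
for name, pixel_um, n_pix in cameras:
    scale = pixel_scale_arcsec(FOCAL_LENGTH_MM, pixel_um)
    fov = field_of_view_arcmin(FOCAL_LENGTH_MM, pixel_um, n_pix)
    print(f"{name}: {scale:.2f} arcsec/pix, {fov:.1f} arcmin per side")
```

The numbers from this nominal calculation land close to, but not exactly on, the approximate ("~") values quoted above.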

What filters does Stone Edge use?

We have an Apogee AFW-50-7S filter wheel and use the same filter set as the Sloan Digital Sky Survey, bought from Asahi.

These are five 50 x 50 mm square filters, each 5 mm thick. There is also a narrowband (12 nm FWHM) H-alpha filter.

+----------+---------+------+--------------+
| FilterID | Name    | Slot | Description  |
+----------+---------+------+--------------+
| 1        | OIII    | 1    | -            |
| 2        | g-band  | 2    | AST0536 A133 |
| 3        | r-band  | 3    | AST0537 A134 |
| 4        | i-band  | 4    | AST0538 A135 |
| 5        | SIII    | 5    | -            |
| 6        | clear   | 6    | ESCO Q320188 |
| 7        | h-alpha | 7    | ZBPA660      |
+----------+---------+------+--------------+

Other filters at SEO are z-band (AST0539 A136) and u-band (AST0532 A132) but those are currently not installed.

What is Stone Edge’s magnitude limit?

There is no single limit: it depends on how long you expose your images, and on the magnitude and surface brightness of the object. Your intuition for this will get better with practice. Some reference points: a good exposure for the Ring Nebula (M57), with a magnitude of 8.8, is 120 seconds, and a good exposure for the Dumbbell Nebula (M27), with a magnitude of 7.5, is 60 seconds.

What tools do I need to get started with Stone Edge?

Try downloading these programs if you can! Let us know if you need help.

- Stone Edge Tunnel : Tunnel to enter SEO GUI

- http://localhost:8080/StoneEdge3/ : Webpage with which to access GUI

- A key to the tunnel : Email us for a key if you would like to use SEO

- Practice Tunnel : Practice tunnel if you want to try it out before night time

- Stellarium : Easily find out what is observable in Sonoma, California (SEO site)

- TopCat : Easily process data tables and make graphs from them

- DS9 : More advanced image visualization program

- AstroImageProcessor : For making quick color images of your objects. Look for a tutorial here.

Some additional tools are Astrometrica and APT (Aperture Photometry Tool).

How can I download my images?

If you took images using the GUI, you can download the images at https://stars.uchicago.edu/filemanager/. If you need the username and password, email us and we will give it to you.

What do I do if the telescope pointing is off?

If the object is in the field of view but off center, you can estimate how many degrees you need to offset the image by downloading it, opening it in SAO DS9, and measuring the distance between the center of the image and your object.

If the object is not in the field of view, download the image and load it into nova.astrometry.net. Astrometry.net will plate solve your image. Open the solved image in SAO DS9, measure the coordinates of the center, and calculate the distance between the center of your image and the coordinates of the object.

To offset:

Using the GUI:

Click on the command tab and type in “tx offset dec= ra= cos”, where the offset units are degrees.

Using the Command Line:

The command for performing an offset is “tx offset dec= ra= cos”, and the offset units are degrees.
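
If you have the plate-solved center of your image and the catalog coordinates of your object, the offsets can be worked out with a few lines of Python. This is only a sketch: the function name and the example image center are made up for illustration. This page does not spell out what the `cos` keyword does or which sign convention the command expects, so the sketch returns both the plain RA coordinate difference and the RA difference scaled by cos(dec), and you should check the result against a test image.

```python
import math


def pointing_offset_deg(center_ra_deg, center_dec_deg, target_ra_deg, target_dec_deg):
    """Offsets from the image center to the target, all in degrees.

    Returns (d_ra, d_ra_on_sky, d_dec), where d_ra is the plain RA coordinate
    difference and d_ra_on_sky is that difference scaled by cos(dec).
    Intended for the small offsets typical of a pointing error.
    """
    # Wrap the RA difference into [-180, 180) so 359.9 -> 0.1 counts as +0.2
    d_ra = (target_ra_deg - center_ra_deg + 180.0) % 360.0 - 180.0
    d_dec = target_dec_deg - center_dec_deg
    # Lines of constant RA converge toward the poles, so the true angular
    # separation in the RA direction shrinks by cos(dec)
    d_ra_on_sky = d_ra * math.cos(math.radians(target_dec_deg))
    return d_ra, d_ra_on_sky, d_dec


# Target: M57 at roughly RA 283.396 deg, Dec +33.029 deg.
# Image center: hypothetical values, standing in for a plate-solve result.
d_ra, d_ra_on_sky, d_dec = pointing_offset_deg(283.60, 33.15, 283.396, 33.029)
print(f"RA difference:        {d_ra:+.3f} deg")
print(f"RA difference on sky: {d_ra_on_sky:+.3f} deg")
print(f"Dec difference:       {d_dec:+.3f} deg")
```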

Most objects are imaged using the g, r, i, u, and z filters. How can I create a color image using more than three filters?

In principle, you can make color images from as many bands as you’d like. The “truest” color (by true, I mean what we see with our eyes) comes from combining three bands as RGB (or here g’, r’, i’) and weighting each by how much light its filter transmits (i.e. the throughput). It is also easier to work with 3 bands because a lot of programs have a 3-color combination built in (e.g. ds9). But ultimately, making color images isn’t a scientific process; it’s more of an artistic one. That means you can take as many bands as you’d like and place them anywhere on the visible spectrum to create a color image. This can get complicated because you have to do it manually, but you can do it with image processing programs like Photoshop.
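
If you would rather script the combination than do it by hand, here is a minimal sketch using NumPy. It assumes your band images are already aligned, the same size, and loaded as 2-D arrays. The color assigned to each band is an arbitrary artistic choice of ours, not an SEO standard, and the function name is made up for this example.

```python
import numpy as np

# Hypothetical band-to-color assignment as (R, G, B) weights.
# Bluer filters get bluer colors; change these to taste.
BAND_COLORS = {
    "u": (0.4, 0.0, 1.0),  # violet
    "g": (0.0, 0.3, 1.0),  # blue
    "r": (0.0, 1.0, 0.0),  # green
    "i": (1.0, 0.5, 0.0),  # orange
    "z": (1.0, 0.0, 0.0),  # red
}


def combine_bands(images, colors=BAND_COLORS):
    """Blend any number of aligned 2-D band images into one RGB image in [0, 1]."""
    shape = np.asarray(next(iter(images.values()))).shape
    rgb = np.zeros(shape + (3,), dtype=float)
    for band, img in images.items():
        img = np.asarray(img, dtype=float)
        # Stretch each band linearly to [0, 1] so no single band dominates
        lo, hi = img.min(), img.max()
        if hi > lo:
            scaled = (img - lo) / (hi - lo)
        else:
            scaled = np.zeros_like(img)
        # Add this band's brightness into the R, G, B channels using its color
        rgb += scaled[..., np.newaxis] * np.asarray(colors[band], dtype=float)
    # Rescale so the brightest channel value is 1
    peak = rgb.max()
    return rgb / peak if peak > 0 else rgb


# Demo with random stand-in data instead of real images
rng = np.random.default_rng(0)
demo = {band: rng.random((4, 4)) for band in "ugriz"}
rgb = combine_bands(demo)
print(rgb.shape)  # (4, 4, 3)
```

Real images usually need a nonlinear stretch (for example a log or asinh stretch) before they look good; the linear stretch here just keeps the sketch short.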

I know how to take darks. Where or how can I acquire bias and flat frames? How and why do I use them?

You can take biases yourself: they are just 0.1-second darks. They are basically very short closed-shutter exposures that measure the “zero-level” of each pixel of the CCD. You subtract the bias frame from your images to remove that zero-level and standardize the output.

Flats are taken automatically by the telescope. You can find them on the stars server in the file manager. Flats measure the optical imperfections across the image and the variations in pixel-to-pixel sensitivity. Usually there are dust spots on the optics, and the light isn’t distributed evenly across the image (usually there’s vignetting). You can correct for this by finding the mode pixel value of the flat, normalizing the flat to that mode (i.e. most pixel values should be 1), and dividing your galaxy/object image by the normalized flat.
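
The bias and flat steps described above can be sketched in a few lines of NumPy. This is an illustration, not the SEO pipeline: the function names are ours, the frames are assumed to be already loaded as 2-D arrays of the same size, the flat is assumed to still contain the bias level, and dark subtraction is left out.

```python
import numpy as np


def mode_value(image):
    """Most common pixel value, after rounding to whole counts."""
    values, counts = np.unique(np.rint(image).astype(np.int64), return_counts=True)
    return float(values[np.argmax(counts)])


def calibrate(raw, bias, flat):
    """Bias-subtract and flat-field one image.

    raw, bias, flat: 2-D arrays of the same shape, in raw counts.
    """
    raw = np.asarray(raw, dtype=float)
    bias = np.asarray(bias, dtype=float)
    flat = np.asarray(flat, dtype=float)

    # Remove the zero-level from the flat, then normalize it to its mode
    # so that most pixels of the normalized flat are close to 1
    flat_debiased = flat - bias
    flat_norm = flat_debiased / mode_value(flat_debiased)

    # Remove the zero-level from the science image and divide out the flat.
    # Pixels where the flat is 0 would need masking; that is not handled here.
    return (raw - bias) / flat_norm


# Tiny made-up example: one pixel is only half as sensitive as the others
bias = np.full((2, 2), 100.0)
flat = bias + np.array([[1000.0, 1000.0], [1000.0, 500.0]])
raw = bias + np.array([[200.0, 200.0], [200.0, 100.0]])
print(calibrate(raw, bias, flat))  # every pixel comes out as 200
```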

When imaging a specific object, should I use different exposure times for different filters?

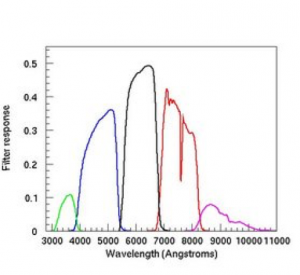

This usually depends on the throughput, i.e. how much light is allowed to pass through a filter. Here are the throughput curves (they are in u’, g’, r’, i’, z’ order). For example, you can see that u’ lets in a lot less light than r’, so the u’ exposure should be considerably longer.
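
As a first guess, you can scale a known good exposure time by the ratio of throughputs. The sketch below does just that. The throughput numbers in it are placeholders we made up for illustration, NOT values read off the curves above, so substitute your own. It also ignores the color of the object and the detector response, so treat the result as a starting point.

```python
def scaled_exposure(reference_exposure_s, reference_throughput, filter_throughput):
    """Exposure time that collects roughly the same signal as the reference.

    Assumes collected signal is proportional to throughput x exposure time.
    """
    return reference_exposure_s * reference_throughput / filter_throughput


# Placeholder peak throughputs (made up for illustration only)
throughput = {"u": 0.10, "g": 0.40, "r": 0.50, "i": 0.40, "z": 0.15}

reference_band = "r"
reference_exposure_s = 60.0  # an exposure you already know works in r'

for band, value in throughput.items():
    t = scaled_exposure(reference_exposure_s, throughput[reference_band], value)
    print(f"{band}': about {t:.0f} s")
```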
